In the previous article in our metrology series, we looked at what machine vision is as a whole and how it integrates with metrology. We also drew a slight distinction between machine vision and computer vision. That distinction matters because the two terms are often used interchangeably, but they are not necessarily the same. In this article, we will explore the definition of computer vision, its applications, and how it relates to metrology as a whole.

Doing Fun Stuff in Computer Vision

Computer vision is an interdisciplinary scientific field that deals with how computers can be made to analyze data from digital images or videos. From the perspective of engineering, it seeks to automate tasks that the human visual system can do.

Computer vision tasks include methods for acquiring, processing, analyzing and understanding digital images, and the extraction of high-dimensional data from the real world in order to produce numerical or symbolic information. This information can then be used to make decisions, for example through artificial intelligence. The transformation of visual images into descriptions of the world can interface with other human thought processes. This image comprehension can be seen as the understanding of symbolic information from image data using models constructed with the aid of geometry, physics, statistics, and learning theory. We have discussed this in more depth, in terms of complex analysis and geometry, earlier in this series.

As a scientific discipline, computer vision is concerned with the theory behind artificial systems that extract information from images. The image data can take many forms, such as video sequences, views from multiple cameras, or multidimensional data from a medical scanner. Computer vision also seeks to apply its theories and models to the construction of computer vision systems.

Some applications of computer vision include the following:

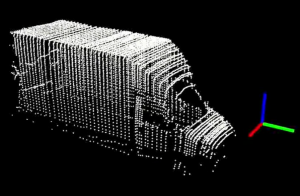

- 3D reconstruction

- Video tracking

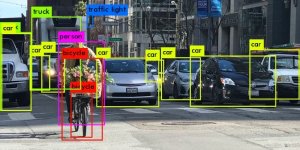

- Object recognition

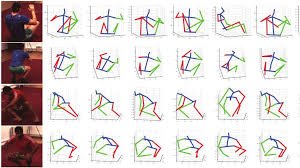

- 3D pose estimation

- Motion estimation

- Image restoration

3D reconstruction is the process of capturing the shape and appearance of real objects. This process can be accomplished by either active or passive methods. If the model is allowed to change its shape over time, this is referred to as non-rigid or spatio-temporal reconstruction. Spatio-temporal reconstruction is also called 4D reconstruction because it adds time as a fourth dimension alongside the three spatial coordinates (x-position, y-position, z-position, and time).

Video tracking is the process of locating a moving object (or multiple objects) over time using a camera. It has many uses, including human-computer interaction, security and surveillance, video communication and compression, augmented reality, traffic control, medical imaging, and video editing. Video tracking can be time consuming because of the amount of data contained in a video. Complexity increases further when object recognition techniques are needed for tracking, which is a challenging problem in its own right.
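To make the idea of locating an object over time concrete, here is a minimal sketch, assuming the simplest possible case: a single bright object on a dark background, tracked by thresholding each frame and taking the centroid of the bright pixels. The function names (`centroid`, `track`, `make_tracking_frame`) and the synthetic frames are illustrative assumptions, not something from this series, and real trackers are considerably more involved.

```python
def centroid(frame, threshold=128):
    """Return the (row, col) centroid of pixels brighter than `threshold`, or None."""
    row_sum = col_sum = count = 0
    for y, row in enumerate(frame):
        for x, value in enumerate(row):
            if value > threshold:
                row_sum += y
                col_sum += x
                count += 1
    if count == 0:
        return None  # no object visible in this frame
    return (row_sum / count, col_sum / count)


def track(frames, threshold=128):
    """Locate the object in every frame, giving its trajectory over time."""
    return [centroid(frame, threshold) for frame in frames]


def make_tracking_frame(size, top, left):
    """Hypothetical test data: a dark frame with one bright 2x2 square."""
    frame = [[0] * size for _ in range(size)]
    for y in range(top, top + 2):
        for x in range(left, left + 2):
            frame[y][x] = 255
    return frame


# Four frames in which the square moves one pixel to the right each time.
frames = [make_tracking_frame(8, 1, step) for step in range(4)]
trajectory = track(frames)
print(trajectory)
```

Every pixel of every frame has to be visited, which hints at why the amount of data in a video makes tracking expensive.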

Object recognition technology in the field of computer vision is used for finding and identifying objects in an image or video sequence. Humans recognize a large number of objects in images with little effort. We are able to do this even though the image of an object may vary with viewpoint, appear at many different sizes and scales, or be translated or rotated. Objects can even be recognized when they are partially hidden from view. This task is still a challenge for computer vision systems. Many approaches to the task have been implemented over multiple decades.

3D pose estimation is the problem of determining, from a 2D image, the transformation (position and orientation) of the 3D object that the image depicts. The need for 3D pose estimation arises from the limitations of feature-based pose estimation. There are environments where it is difficult to extract corners or edges from an image. To deal with these issues, the object is represented as a whole through the use of free-form contours.
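The relationship between a pose and a 2D image can be illustrated with a small sketch. It assumes an idealized pinhole camera, a known 3D point model, a known translation, and a single unknown rotation angle about the vertical axis. It is point-based rather than the free-form contour approach described above, and every name in it (`project`, `reprojection_error`, `model`) is hypothetical. The pose is recovered by brute force: try each candidate angle and keep the one whose projection best matches the observed image points.

```python
import math


def project(points, yaw, translation, focal=1.0):
    """Rotate 3D points about the y axis by `yaw`, translate, then pinhole-project to 2D."""
    tx, ty, tz = translation
    c, s = math.cos(yaw), math.sin(yaw)
    image_points = []
    for x, y, z in points:
        xr = c * x + s * z + tx
        yr = y + ty
        zr = -s * x + c * z + tz  # depth along the camera axis
        image_points.append((focal * xr / zr, focal * yr / zr))
    return image_points


def reprojection_error(observed, predicted):
    """Sum of squared 2D distances between observed and predicted image points."""
    return sum((u - pu) ** 2 + (v - pv) ** 2
               for (u, v), (pu, pv) in zip(observed, predicted))


# Assumed 3D model: a square plus one point off its plane.
model = [(-1, -1, 0), (1, -1, 0), (1, 1, 0), (-1, 1, 0), (0, 0, 1)]
translation = (0.0, 0.0, 5.0)

# Synthetic "observed" image of the object at a yaw of 20 degrees.
observed = project(model, math.radians(20), translation)

# Search candidate yaws in 1-degree steps for the best match.
candidates = [math.radians(d) for d in range(-45, 46)]
best_yaw = min(
    candidates,
    key=lambda yaw: reprojection_error(observed, project(model, yaw, translation)),
)
estimated_degrees = round(math.degrees(best_yaw))
print(estimated_degrees)
```

A full pose has six degrees of freedom (three for rotation, three for translation), so practical methods use numerical optimization rather than exhaustive search.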

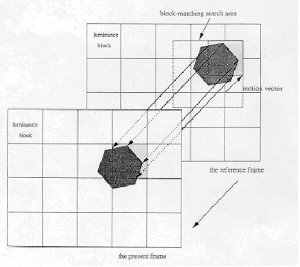

Motion estimation is the process of determining motion vectors that describe a transformation from one 2D image to another, usually between adjacent frames in a video sequence. This is an ill-posed problem because the motion is in three dimensions, but the images are a projection of the 3D scene onto a 2D plane. The motion vectors may relate to the whole image or to specific parts, such as rectangular blocks, arbitrarily shaped patches, or individual pixels. The motion vectors may be represented by a translational model or by many other models that can approximate the motion of a real video camera, such as rotation and translation in all three dimensions and zoom.
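As a rough illustration of motion vectors for rectangular blocks under a purely translational model, the sketch below uses exhaustive block matching: a block from the previous frame is compared against shifted positions in the current frame, and the shift with the smallest sum of absolute differences (SAD) is taken as the motion vector. Block matching with SAD is one common technique chosen here for illustration. The function names and the synthetic frames are assumptions, not code from this series.

```python
def sad(frame_a, frame_b, ay, ax, by, bx, size):
    """Sum of absolute differences between two size x size blocks."""
    total = 0
    for dy in range(size):
        for dx in range(size):
            total += abs(frame_a[ay + dy][ax + dx] - frame_b[by + dy][bx + dx])
    return total


def block_motion_vector(prev, curr, top, left, size, search):
    """Find the (dy, dx) shift that best maps a block of `prev` into `curr`."""
    height, width = len(curr), len(curr[0])
    best_cost, best_vec = None, (0, 0)
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            y, x = top + dy, left + dx
            # Skip candidate positions that fall outside the frame.
            if y < 0 or x < 0 or y + size > height or x + size > width:
                continue
            cost = sad(prev, curr, top, left, y, x, size)
            if best_cost is None or cost < best_cost:
                best_cost, best_vec = cost, (dy, dx)
    return best_vec


def make_square_frame(height, width, top, left, size):
    """Hypothetical test data: a dark frame with one bright square."""
    frame = [[0] * width for _ in range(height)]
    for y in range(top, top + size):
        for x in range(left, left + size):
            frame[y][x] = 255
    return frame


prev_frame = make_square_frame(12, 12, 3, 3, 4)
curr_frame = make_square_frame(12, 12, 4, 5, 4)  # square moved 1 down, 2 right
vector = block_motion_vector(prev_frame, curr_frame, top=3, left=3, size=4, search=3)
print(vector)
```

The search only recovers 2D shifts within the image plane, which is exactly the limitation noted above: the underlying 3D motion is not directly observable from the projection.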

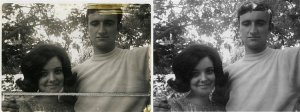

Image restoration is the operation of taking a corrupt or noisy image and estimating the clean, original image. Corruption may come in many forms, such as motion blur, noise, and camera mis-focus. Image restoration is different from image enhancement in that the latter is designed to emphasize features of the image that make it more pleasing to the observer, but not necessarily to produce realistic data from a scientific point of view. Image enhancement is the kind of work typically done in software such as Adobe Photoshop or Adobe Lightroom. With image enhancement, noise can effectively be removed by sacrificing some resolution, but this is not acceptable in many applications.
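A small sketch can show what estimating the clean image means in the simplest case. It assumes the corruption is isolated "salt and pepper" noise and uses a 3x3 median filter, a classic and widely used noise-removal filter chosen here purely for illustration. The function name `median_filter` and the tiny synthetic image are assumptions.

```python
from statistics import median


def median_filter(image):
    """Apply a 3x3 median filter; border pixels are copied unchanged."""
    height, width = len(image), len(image[0])
    out = [row[:] for row in image]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            window = [image[y + dy][x + dx]
                      for dy in (-1, 0, 1)
                      for dx in (-1, 0, 1)]
            out[y][x] = median(window)
    return out


# A uniform gray image, corrupted by one bright and one dark outlier pixel.
clean = [[100] * 6 for _ in range(6)]
noisy = [row[:] for row in clean]
noisy[2][3] = 255  # "salt"
noisy[4][1] = 0    # "pepper"

restored = median_filter(noisy)
print(restored == clean)
```

On a real image, the same filter would also smooth away some genuine fine detail, which is the resolution trade-off mentioned above.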

In our next articles, we will look in depth at the topics outlined above and relate them to the field of metrology as a whole.

The post What is Metrology Part 11: Computer Vision appeared first on 3DPrint.com | The Voice of 3D Printing / Additive Manufacturing.
